Archive

How not to protect your app

So, I went to Droidcon this week.

And to be honest, it disappointed me almost in every parameter: from content to catering.

I don’t go to many conventions, but compared to August penguin that costs about one tenth for a ticket, Droidcon was surprisingly low quality.

The agenda had not one but two presentations on how to “protect your app from hackers”, and unfortunately, both could be summed up in one word: obfuscate.

Obfuscation is the worst way to secure any software, and Android applications are no different in this regard. If anything, since smartphones today often contain more sensitive and valuable user data then PCs, it is even more important to use real security in mobile apps!

Obfuscation is bad because:

- It is difficult and costly to implement but relatively easy to break.

- It prevents, or at least significantly reduces your ability to debug your app and resolve user reported issues.

- It only needs to be broken once to be broken everywhere, always for any user.

- Fixing it once broken is almost impossible.

Basically, obfuscation is what security experts call “security by obscurity” which is considered very insecure.

Consider this: the entire Internet, including most banking and financial sites runs almost completely on open source software and standard, open, and documented protocols: Apache, NGINX, OpenSSL, Firefox, Chrome.

OpenSSL is particularly interesting, because it provides encryption that is good enough for the most sensitive information on the net, yet it is completely open.

Even the infamous “Dark Web” or more specifically the Tor network, is completely open source.

Truly secure software design does not rely on others not understanding what your code does.

Even malware writers, who’s entire bread and butter depends on hiding what their apps are doing, no longer rely on obfuscation but instead moved on to full blown code encryption and delivering code on demand from a server.

This is because obfuscated code was too easy for security researchers, and even fully automated malware scanners to detect.

If you want to learn more about this, follow security company blogs such as Semantek, Kaspersky and Checkpoint. Their researchers sometimes publish very interesting malware analysis showing the tricks malware writers use to hide their evil code.

Specifically bad advice

Now I would like to go over some specific advice given in one presentation, that I consider to be particularly bad, some of it to the point where I would call it “anti-advice”:

- Use reflection

- Hide things in “native”

- Hide data with protobufs or similar

- Hide code with ProGuard or similar

I listed these “recommendations” from most to least harmful.

If you are considering using any of these technics in your app, please read the following explanation before doing so, and reconsider.

1. Using reflection

Reflection is a powerful tool, but it was not designed to hide code.

If you use reflection to call a method in a class, anyone looking at a disassembled version of your app, or even running simple “strings” utility on it will still see all the method and class names.

You will gain nothing but loose any protection from bugs and crashes that compiler checks and lint tools normally provide.

You could go as far as scramble (or even fully encrypt) the strings holding the names of the classes and methods you call with reflection and only decipher them at run time.

But this takes long time to implement, is very error prone, and will make your app slower since it has a lot more work to do for a single, simple function call.

Stop and think carefully: what would a “hacker” analyzing your app gain from knowing what API you are calling?

The answer will always be: nothing, unless your app is very badly written!

Most security apps, like password managers, advertise exactly what encryption they are using so their customers would know how secure they are.

In a truly secure app, knowing what the app does, will not help a bad guy break it in any way.

I challenge anyone to give me an example in the comments of an API call that is worth hiding in a legitimate app.

2. Hide things in “native”

If you are not familiar with JNI and writing C and C++ code for Android, go read up on it, but not in order to protect your app!

Because Java (and Kotlin) still have performance issues when it comes to certain types of tasks (specifically, games and graphics related code), developers at Google created the Native Development Kit – NDK, to let you include native C and C++ code in your app that will run directly on the device processor and not the JVM (Dalvik / ART).

But just as with reflection, the NDK was not designed to hide your code from prying eyes.

And thus, it will not hide anything!

To many Java developers, particularly ones with no experiences or knowledge developing in native (to the hardware) languages like C, a SO binary file will look like complete gibberish even in a hex editor.

But, just because you don’t understand what you are looking at, does not mean someone trying to crack your app will have a hard time understanding it!

A so file is a standard library used on Linux systems for decades (remember: Android is running on top of the Linux kernel), so there are lots of tools out there to decompile and analyze them.

But your attacker probably won’t even need to decompile your so file. If all they are looking for is some string, like a hard-coded password or API token you put in your app, they can still see it with a simple “strings” utility, same as they would in your Java or Kotlin code. There is no magic here – all strings remain intact when compiling to native.

Also, any external functions or methods your native code calls will appear in the binary file as plain text strings – your OS needs to find them to call them, so they will be there, exposed just like in any other language!

But things can get worse: lets say you have some valuable business logic in your app, and you want to make it harder for hackers to decompile your code and see this logic.

It is true that when you compile Java source, a lot more information about the original code is preserved than when compiling C or C++.

But don’t be tempted by this, because you may leave your self even more exposed!

If a hacker really wants to run your code for his (or hers) nefarious purposes, then wrapping it up in a library that can easily be called from any app is like gift wrapping it for them.

And this is exactly what you will be doing if you put your code in a native SO file: you are putting it in an easy to use library!

Instead of decompiling your code and rewriting it in their app, the hacker can just take your SO file, and call its functions (that must be exposed to work!) from their own app.

They don’t need to know what your code does, they can just feed it parameters and get the result, which is what they really wanted in the first place.

So instead of hampering hacking, you make it easier by using the wrong tool, all the while giving your self a false sense of security.

4. Hide data with protobufs or similar

By now you should have noticed a pattern: one thing all these bad advice have in common is recommending the wrong tool for the job.

Protobufs is an excellent open source tool for data serialization.

It is not a security tool!

The actual advice given in the presentation was to replace JSON in server responses with protobufs in order to make the information sent by the server less readable.

But what security do you gain from this? If your server sends a reply like this:

{

"first_name" : "Jhon",

"last_name" : "Smith",

"phone" : "555-12345",

"email" : "jhon@email.com"

}

converting this structure to a protobuf will look something like this:

xxxxJhonxxxxSmithxxxx555-12345xxxjhon@email.com

Is that really hiding anything?

Protobufs are more compact then JSON, and they can be deserialized faster and easier than JSON but they also have some disadvantages: they are not as flexible as JSON.

It is hard to support optional fields with protobuffs and even harder to create dynamic or self describing objects.

If your app needs flexibility in parsing server replies, or if you have other clients, particularly web clients written with JS that access the same server API, JSON may be the better choice for you.

When deciding whether to use JSON or protobuffs, consider their advantages and disadvantages for your use case, DO NOT CONSIDER SECURITY!

They are both equally insecure, and you will need encryption (always use SSL!) and proper access validation (passwords, tokens, client certificates) if you want to keep your data safe.

4. Hide code with ProGuard or similar

This advice actually talks about the right tool for a change: ProGuard.

This is a tool Google ships with Android Studio, and it does two things: reduces the size of your code and resources after compilation, and slightly obfuscates your code.

This is not a bad tool, but it comes with a cost, and it won’t really give you protection from hackers.

It will rename your methods like getMySecretPassword() to a() but will that really stop anyone from doing anything bad?

At best, it will slow them down, but keep in mind that it will also cost you:

ProGuard has the side effect of rendering all stack traces useless and making debugging the app extremely difficult.

There is a way to mitigate this: you need to keep a special translation file for every single build of your app (because ProGuard randomizes its name mangling).

If you need to support users in production and don’t want to be helpless or work extra hard when they report a crash, you might want to give up on ProGuard.

Also keep in mind that you need to carefully tell ProGuard what not to obfuscate, since you must keep any external API calls, components declared in the manifest and some third party library calls intact, or your app will not run.

Remember – ProGuard will:

- Not keep any hardcoded strings safe.

- Not keep your user password safe if you store it as plain text in your app data folder.

- Not keep your communication safe if you do not use SSL.

- Not protect you from MITM attacks if you do not use certificate pinning.

ProGuard might make your final APK file smaller by getting rid of unused code and reducing length of class and methods names, but you should carefully consider the cost of this reduction you will pay when dealing with bugs and crashes.

I find it is usually just not worth the hassle.

And there are better tools now for reducing download size, such as App Bundles.

Summary

Messing with your code will never make your app more secure. It will not protect you from hackers.

Even if you do not want, or can not, release your app as open source, you still need to remember that trying to hide its code with obfuscation will cost you more then having your app reverse engineered.

The development, debugging, and user support costs can be as devastating as any hacks!

But, if you treat your code as though it is meant to be open, and make sure that even if a bad person can read and understand everything your app does they still can not get your users data or exploit your web server, then, and only then, will your app be truly secure.

And doing that is often easier and cheaper than trying to obfuscate your code or data.

P. S.

One of the presentations mentioned a phenomenon I was not familiar with: “App cloning”.

Apparently, if you publish an ad supported app, some bad people can take your app without your permission, replace your advertisement API keys with their own, and release the app to some unofficial app stores like the ones that are common in China (because Google Play is blocked there by the government).

This way, they will get ad revenue from your app instead of you.

But consider this: would you publish your app to these stores?

If your answer is “no”, then you are not losing anything!

You will never get any money from these users because they will never be able to install your original app, so any effort you put in defending against “cloning” will be a net financial loss to you.

Remember – as a developer, your time is money!

P. S. 2

Someone in the audience asked about Google API keys like the Google Maps API key.

Usually, it is bad practice to put API keys in plain text in the manifest of your app, because anyone can get them from there and use a paid API at your expense.

But this is not the case with Google API keys!

The reason Google tells you to put the key in the manifest, is because Google designed these API keys in such a way, that they will be useless to anyone but you, so stealing them is pointless.

This is a great example of a good security design: instead of relying on app developers to figure out how to distribute an API key to millions of users but keep it safe from hackers, Google tide the key to your signing certificate and your app id (package name).

When you create the API key, you must enter your certificate fingerprint and your package name.

Your private key – the one you use to sign your apps for release, is something most developers already keep very safe. There is never a reason to send it anywhere and it would never be included in the app itself.

It will stay safe on the developers computer.

And without this private key, the public API key will not work.

If it is used in an app signed by anyone else, even if that app fakes your app’s id, the API key will still be invalid.

This is how you secure apps!

Security risks or panic mongering?

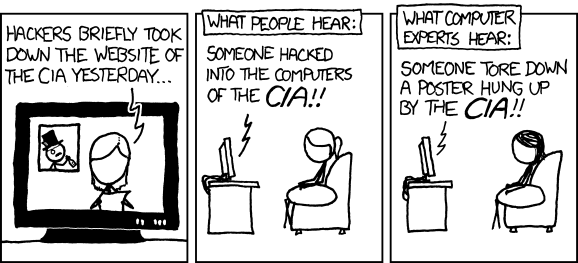

When you read about IT related security threats and breaches in mainstream media, it usually looks like this:

Tech sites and dedicated forums usually do a better job.

Last week, a new article by experts from Kaspersky Lab was doing the rounds on tech sites and forums.

In their blog, the researchers detail how they analyzed 7 popular “connected car” apps for Android phones, that allow opening car doors and some even allow starting the engine. They found 5 types of security flaws in all of them.

Since I am part of a team working on a similar app, a couple of days later this article showed up in my work email, straight from our IT security chief.

This made me think – how bad are these flaws, really?

Unlike most stuff the good folks at Kaspersky find and publish, this time it’s not actual exploits but only potential weaknesses that could lead to discovery of exploits, and personally, I don’t think that some are even weaknesses.

So, here is the list of problems, followed by my personal analysis:

- No protection against application reverse engineering

- No code integrity check

- No rooting detection techniques

- Lack of protection against overlaying techniques

- Storage of logins and passwords in plain text

I am not a security expert, like these guys, just a regular software developer, but I’d like to think I know a thing or two about what makes apps secure.

Lets start from the bottom:

Number 5 is a real problem and the biggest one on the list. Storing passwords as plain text is about the dumbest and most dangerous thing you can do to compromise security of your entire service, and doing so on a platform that gives you dedicated secure storage for credentials with no hassle whatsoever for your users, is just inexcusable!

It is true that on Android, application data gets some protection via file permissions by default, but this protection is not good enough for sensitive data like passwords.

However, not all of the apps on the list do this. Only two of the 7 store passwords unencrypted, and 4 others store login (presumably username) unencrypted.

Storing only the user name unprotected is not necessarily a security risk. Your email address is the username for your email account, but you give that out to everyone and some times publish it in the open.

Same goes for logins for many online services and games that are used as your public screen-name.

Next is number 4: overlay protection.

This one is interesting: as the Kaspersky researchers explain in their article, Android has API that allow one app to display arbitrary size windows with varying degrees of transparency over other apps.

This ability requires a separate permission, but users often ignore permissions.

This API has legitimate uses for accessibility and convenience, I even used it my self in several apps to give my users quick access from anywhere to some tasks they needed.

Monitoring which app is in foreground is also possible, but you would need to convince the user to set you up as an accessibility service, and that is not a simple task and can not be automated without gaining root access.

So here is the rub: there is a potential for stealing user credentials with this method, but to pull it off in a seamless way most users would not notice, is very difficult. And it requires a lot of cooperation from the user: first they must install your malicious app, then they must go in to settings, ignore some severe warnings, and set it up a certain way.

I am not a malware writer either, so maybe I am missing something, but it looks to me like there are other, much more convenient exploits out there, and I have yet to see this technique show up in the real world.

So if I had to guess – I’d say it is not a very big concern. Actually, if you got your app set up as accessibility service, you could still all text from device without the overlay trick, and I can’t think of a way to properly detect when a certain app is in use without this and without root.

No we finally get to the items on the list that aren’t really problems:

Number 3: root detection. Rooted device is not necessarily a compromised device. On the contrary – the only types of root you can possibly detect are the ones the user installed of his own free will, and that means a tech savvy user who knows how to protect his device from malware.

The whole cat and mouse game around root access to phones does more harm to security than letting users have official root access from the manufacturer, but this is a topic for a separate post.

If some app uses root exploit behind its users back, it will only be available to that app, and almost impossible to detect from another app, specially one that is not suppose to be a dedicated anti-malware tool.

Therefore, I see no reason to count this as a security flaw.

Number 2: Code integrity check. This is just an overkill for each app to roll out on its own.

Android already has mandatory cryptographic signing in place for all apps that validates the integrity of every file in the APK. In latest versions of Android, v2 of the signing method was added that also validates the entire archive as a whole (if you didn’t know this, APK is actually just a zip file).

So what is the point of an app trying to check its code from inside its code?

Since Android already has app isolation and signing on a system level, any malware that gets around this, and whose maker has reversed enough of the targeted app code to modify its binary in useful ways, should have no trouble bypassing any internal code integrity check.

The amount of effort on the side of the app developer trying to protect his app, vs the small amount of effort it would take to break this protection just isn’t worth it.

Plus, a bad implementation of such integrity check could do more harm then good, by introducing bugs and hampering users of legitimate copies of the app leading to an overall bad user experience.

And finally, the big “winner”, or is it looser?

Number 1 on the list: protection from reverse engineering.

Any decent security expert will tell you that “security by obscurity” does not work!

If all it takes to break your app is to know how it works, consider it broken from the start. The most secure operating systems in the world are based on open source components, and the algorithms for the most secure encryptions are public knowledge.

Revers engineering apps is also how security experts find the vulnerabilities so the app makers can fix them. It is how the information for the article I am discussing here was gathered!

Attempting to obfuscate the code only leads to difficult debugging, and increased chance of flaws and security holes in the app.

It can be considered an anti-pattern, which is why I am surprised it is featured at the top of the list of security flaws by some one like Kasperskys experts.

Lack of reverse engineering protection is the opposite of security flaw – it is a good thing that can help find real problems!

So there you have it. Two real security issues (maybe even one and a half) out of five, and two out of seven apps actually vulnerable to the biggest one.

So what do you think? Are the connected cars really in trouble, or are the issues found by the experts minor, and the article should have actually been a lot shorter?

Also, one small funny fact: even though the writers tried to hide which apps they tested, it is pretty clear from the blurred icons in the article that one of the apps is from Kia and another one has the Volvo logo.

Since what the researchers found were not actual vulnerabilities that can be exploited right away, but rather bad practices, it would be more useful to publish the identity of the problematic apps so that users could decide if they want to take the risk.

Just putting it out there that “7 leading apps for connected cars are not secure” is likely to cause unnecessary panic among those not tech savvy enough to read through and thoroughly understand the real implications of this discovery.

Android, Busybox and the GNU project

Richard Stallman, the father of the Free Software movement and the GNU project, always insists that people refer to some Linux based operating systems as “GNU/Linux”. This point is so important to him, he will refuse to grant an interview to anyone not willing to use the correct term.

There are people who don’t like this attitude. Some have even tried to “scientifically prove” that GNU project code comprises such a small part of a modern Linux distribution that it does not deserved to be mentioned in the name of such distributions.

Personally, I used to think that the GNU project deserved recognition for it’s crucial historical role in building freedom respecting operating systems, even if it was only a small part of a modern system.

But a recent experience proved to me that it is not about the amount of code lines or number of packages. And it is not a historical issue. There really is a huge distinction between Linux and GNU/Linux, but to notice it you have to work with a different kind of Linux. One that is not only stripped of GNU components, but of its approach to system design and user interface.

Say hello to Android. Or should I say Android/Linux…

Many people forget, it seems, that Linux is just a kernel. And as such, it is invisible to all users, advanced and novice alike. To interact with it, you need an interface, be it a text based shell or a graphical desktop.

So what happens when someone slaps a completely different user-space with a completely different set of interfaces on top of the Linux kernel?

Here is the story that prompted me to write this half rant half tip post:

My boss wanted to backup his personal data on his Android phone. This sounds like it should be simple enough to do, but the reality is quite the opposite.

In the Android security model, every application is isolated by having its own user (they are created sequentially and have names like app_123).

An application is given its own folder in the devices data partition where it is supposed to store its data such as configuration, user progress (for games) etc.

No application can access the folder of another application and read its data.

This makes sense from the security perspective, except for one major flaw: no 3rd party backup utility can ever be made. And there is no backup utility provided as part of the system.

Some device makers provide their own backup utilities, and starting with Android 4.0 there is a way to perform a backup through ADB (which is part of Android SDK), but this method is not designed for the average user and has several issues.

There is one way, an application on the device can create a proper backup: by gaining root privileges.

But Android is so “secure” it has no mechanism to allow the user to grant such privileges to an application, no matter how much he wants or needs to.

The solution of course, is to change the OS to add the needed capability, but how?

Usually, the owner of a stock Android device would look for a tool that exploits a security flaw in the system to gain root privileges. Some devices can be officially unlocked so a modified version of Android can be installed on them with root access already open.

The phone my boss has is somewhat unusual: it has a version of the OS designed for development and testing, so it has root but the applications on it do not have root.

What this confusing statement means is, that the ADB daemon is running with root privileges on the device allowing you to get a root shell on the phone from the PC and even remount the system partition as writable.

But, there is still no way for an application running on the device to gain root privileges, so when my boss tried to use Titanium Backup, he got a message that his device is not “rooted” and therefore the application will not work.

Like other “root” applications for Android, Titanium Backup needs the su binary to function. But stock Android does not have a su binary. In fact, it does not even have the cp command. Thats right – you can get a shell interface on Android that might look a little bit like the “regular Linux”, but if you want to copy a file you have to use cat.

This is something you will not see on a GNU/Linux OS, not even other Linux based OSs designed for phones such as Maemo or SHR.

Google wanted to avoid any GPL covered code in the user-space (i.e. anywhere they could get away with it), so not only did they not use a “real” shell (such as BASH) they didn’t even use Busybox which is the usual shell replacement in small and embedded systems. Instead, they created their own very limited (or as I call it neutered) version called “Toolbox”.

Fortunately, a lot of work has been done to remedy this, so it is not hard to find a Busybox binary ready made to run on Android powered ARM based device.

The trick is installing it. Instructions vary slightly from site to site, but I believe the following will work in most cases:

adb remount adb push busybox /system/bin adb shell chmod 6755 /system/bin/busybox adb shell busybox --install /system/bin

Note that your ADB must run as root on the device side!

The important part to notice here is line 3: you must set gid and uid bits on the busybox binary if you want it to function properly as su.

And no – I didn’t write the permissions parameter to chmod as digits to make my self look like a “1337 hax0r”. Android’s version of chmod does not accept letter parameters for permissions.

After doing the steps above I had a working busybox and a proper command shell on the phone, but the backup application still could not get root. When I installed a virtual terminal application on the phone and tried to run su manually I got the weirdest error: unknow user: root

How could this be? ls -l clearly showed files belonging to ‘root’ user. As GNU/Linux user I was used to more descriptive and helpful error messages.

I tried running ‘whoami’ from the ADB root shell, and got a similarly cryptic message: unknown uid 0

Clearly there was a root user with the proper UID 0 on the system, but busybox could not recognize it.

Googling showed that I was not the only one encountering this problem, but no solution was in sight. Some advised to reinstall busybox, others suggested playing with permissions.

Finally, something clicked: on a normal GNU/Linux system there is a file called passwd in etc folder. This file lists all the users on the system and some information for each user such as their home folder and login shell.

But Android does not use this file, and so it does not exist by default.

Yet another difference.

So I did the following:

adb shell # echo 'root::0:0:root:/root:/system/sh' >/etc/passwd

This worked like a charm and finally solved the su problem for the backup application. My boss could finally backup and restore all his data on his own, directly on the phone and without any special trickery.

Some explanation of the “magic” line:

In the passwd file each line represents a single user, and has several ‘fields’ separated by colons (:). You can read in detail about it here.

I copied the line for the root user from my PC, with some slight changes:

The second field is the password field. I left it blank so the su command will not prompt for password.

This is a horrible practice in terms of security, but on Android there is no other choice, since applications attempting to use the su command do not prompt for password.

There are applications called SuperUser and SuperSU that try to ask user permission before granting root privileges, but they require a special version of the su binary which I was unable to install.

The last field is the “login shell” which on Android is /system/sh

The su binary must be able to start a shell for the application to execute its commands.

Note, this is actually a symlink to the /system/mksh binary, and you may want to redirect it to busybox.

So this is my story of making one Android/Linux device a little more GNU/Linux device.

I took me a lot of time, trial and error and of course googling to get this done, and reminded me again that the saying “Linux is Linux” has its limits and that we should not take the GNU for granted.

It is an important part of the OS I use both at home and at work, not only in terms of components but also in terms of structure and behavior.

And it deserves to be part of the OS classification, if for no other reason than to distinguish the truly different kinds of Linux that are out there.

Solutions vs Products

I originally intended this blog to be about development, with programming tips, tricks, and maybe even following some open source project of mine, but for now, I just couldn’t find any suitable material of this kind to publish.

Most of the new stuff I learned recently was already well documented else were, and I did not want my blog to be a copy of a copy bringing no added value.

But I don’t want it to be strictly opinionated rants ether, so I decided to start a new series, which is something in between: technical examples (not necessarily code), that go to prove my strong opinion that Free and Open Source Software is better than closed source non free software.

I call this series: “Solution vs Products”.

In Free Software, developers always seek to provide a solution for a certain problem. Software solution that will fulfill a certain need. Very often, it is their own need, but that does not mean that others do not benefit greatly from the solution.

Companies, that build their business on Free and Open Source software like RedHat and Canonical, make money from providing solutions to their customers, not simply selling them products.

The difference, is not just a marketing slang. It is in the kinds of programs that are available, and the features these programs have. In this series, I will demonstrate my personal encounters with features of Free Software that proprietary software does not provide, and some, I believe can not provide, under its current business model.

But, rather than continuing to describe it, lets just jump to an example that will demonstrate what I am talking about:

Drivers, drivers, drivers…

One of the myths about GNU/Linux and Free operating systems in general, is that they don’t support a lot of hardware.

In plain folks talk “There ain’t no drivers for this thing…”

But reality is, that hardware support in Linux distributions is often better than in the latest version of Microsoft Windows. The myth is propagated by the fact that just about any piece of hardware you buy will have a disk with Windows drivers accompanying it, but no Linux drivers.

People don’t realize this is because such a thing is not needed.

Some time ago, I had a faithful old Pentium 4 2.8GHz computer with a simple graphics card based on Nvidia chip.

There was no driver problem for this card in Windows XP, and it was also recognized out of the box by Ubuntu 7.10, though it had to install the proprietary Nvidia driver to fully support it.

That, was actually less of a hassle than installing the driver for XP from the CD that came with the card, but since Ubuntu 7.10 is significantly newer then Windows XP, it can be forgiven.

One day, the card died (or fried, I am not sure which). Fortunately, I still had the manual for the motherboard, so I knew by the beep sounds my computer made that the fault was in the graphics card and not any other component.

I went to the nearest computer store and got a replacement card. It had the exact same Nvidia chip in it, but the card itself was from a different manufacturer then the old one.

When I plugged it in and booted up, Ubuntu worked as though nothing happened. The Nvidia driver was universal, and it didn’t care that I had a different card in, as long as it had a supported chip in it.

With XP however, the situation was not nearly as good. I had to boot up in “Safe Mode”, uninstall the old driver, then boot up in normal mode and install a different driver for the new card.

Yet another case that demonstrates this issue occurred to me when I bought a very cheap web camera as part of a bet.

The bet was simple: will it be recognized out of the box by Ubuntu? I said “yes” but some people doubted that was possible. Well, I did not have a web cam, and Office Depot were selling some dirt cheap model, so I bought it.

To be fair, I lost the bet. At the time (2008) to get a camera with that particular chip working on Ubuntu a kernel module had to be compiled.

Two years later, however, the module is now part of the official distribution, and the camera is recognized out of the box.

And what of Windows 7? Nothing. since the CD I got with the camera does not contain drivers for it, and since there is no way of identifying the cameras manufacturer (it carries no trademarks), it is useless for Windows user.

Fortunately, I am not a Windows user…

One last case of “driver issues” I keep running in to at work, is with Android devices.

These devices (mostly phones and tablets) use a system called Android Debug Bridge (ADB for short), to communicate with the PC to aid in developing software. Through ADB the developer can debug applications (duh!), read system logs, get shell access to the device and more.

When working on Windows, every individual Android device needs a special driver to be recognized for ADB connection. Even two different phones from the same manufacturer need separate drivers.

This drives a couple of Android developers I know crazy.

On Linux, on the other hand, no driver is necessary. The PC side ADB component can locate any ADB capable device connected to USB and communicate with it.

I do not know what exactly caused the driver architecture to be so drastically different between Windows and Linux. Perhaps it was a purely engineering decision.

But perhaps, it was the fact that much of the hardware support for Linux had to be achieved through reverse engineering due to lack of cooperation from the manufacturers, that brought about modules that support entire families of products and kernel that provides ease of access to peripheral hardware for user-space programs even without a kernel module.

Either way, we have here three small examples where Free Software makes life easy while proprietary software gives you a headache.

Next up: Emergency computer resurrection: a vital solution no proprietary software company could possibly provide.

Stay tuned!

![[FSF Associate Member]](https://i0.wp.com/static.fsf.org/nosvn/associate/fsf-11332.png)